Human Machine Interfaces

AnthroTronix is dedicated to optimizing the interaction between people and technology. We believe that, by improving human-machine interfaces, we can increase the benefits that technology can provide. Our intuitive interfaces help individuals directly connect to computers, robotic systems, and other programmed devices.

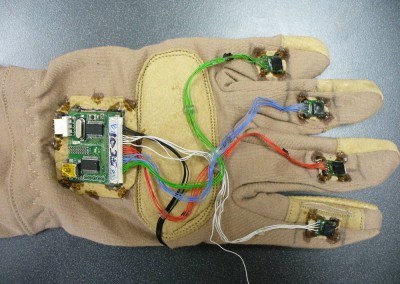

NuGlove™ Gesture Recognition System

NuGlove enables natural interaction with devices, platforms, and software systems through intuitive gestures.

- Enables use of static and dynamic hand- and arm-based gestures for command and control of devices, platforms, and software systems

- Provides fine motor control and promotes psychomotor learning in immersive environments

- Supports non-verbal, non-line-of-sight communications

NuGlove itself is an instrumented glove equipped with a suite of Inertial Measurement Units (IMUs) with accelerometers, gyroscopes, and magnetometers that together provide 9-axis detection. These sensors measure the orientation of different parts of the hand, as well as directional information, including azimuth.

Machine learning-based gesture recognition software processes sensor data from NuGlove to recognize pre-defined static and dynamic hand- and arm-based gestures (e.g., military gestures) and calculates azimuth for control of, and communication with, devices, platforms, and software systems. The software converts the sensor values from each of the glove's 7 IMUs into quaternions, relative to the mainboard IMU, and compares those quaternions to previously saved gestures. Additionally, NuGlove can be used for direct movement control.
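To illustrate the idea of expressing each IMU's orientation relative to the mainboard IMU and comparing it to saved gestures, here is a minimal Python sketch. It is not AnthroTronix's software: the function names, the `(w, x, y, z)` unit-quaternion layout, the nearest-template matching (a simple stand-in for the machine learning-based recognizer), the angle threshold, and the two-sensor example poses are all assumptions made for illustration.

```python
import math

def q_conj(q):
    """Conjugate of a unit quaternion (w, x, y, z), i.e. its inverse rotation."""
    w, x, y, z = q
    return (w, -x, -y, -z)

def q_mul(a, b):
    """Hamilton product a * b."""
    aw, ax, ay, az = a
    bw, bx, by, bz = b
    return (
        aw * bw - ax * bx - ay * by - az * bz,
        aw * bx + ax * bw + ay * bz - az * by,
        aw * by - ax * bz + ay * bw + az * bx,
        aw * bz + ax * by - ay * bx + az * bw,
    )

def relative(q_ref, q_sensor):
    """Sensor orientation expressed in the reference (mainboard) frame."""
    return q_mul(q_conj(q_ref), q_sensor)

def angle_between(a, b):
    """Rotation angle in radians between two unit quaternions."""
    dot = abs(sum(x * y for x, y in zip(a, b)))  # abs: q and -q are the same rotation
    return 2.0 * math.acos(min(1.0, dot))

def classify(pose, templates, max_mean_angle=0.35):
    """Return (name, score) of the closest saved gesture, or (None, score)
    if the mean angular distance exceeds the assumed threshold."""
    best_name, best_score = None, float("inf")
    for name, template in templates.items():
        score = sum(angle_between(p, t) for p, t in zip(pose, template)) / len(template)
        if score < best_score:
            best_name, best_score = name, score
    return (best_name if best_score <= max_mean_angle else None), best_score

def q_about_z(degrees):
    """Helper for the example: rotation of `degrees` about the z axis."""
    half = math.radians(degrees) / 2.0
    return (math.cos(half), 0.0, 0.0, math.sin(half))

# Hypothetical saved gestures, using two sensors instead of seven for brevity.
templates = {
    "flat": [q_about_z(0), q_about_z(0)],
    "bent": [q_about_z(90), q_about_z(90)],
}

mainboard = q_about_z(30)                    # the whole hand is turned 30 degrees
raw = [q_about_z(118), q_about_z(118)]       # raw sensor orientations
pose = [relative(mainboard, q) for q in raw] # 88 degrees relative to the mainboard
name, score = classify(pose, templates)
print(name, round(score, 4))
```

Working in the mainboard frame means the same hand shape yields the same relative quaternions regardless of how the whole hand is turned, which is why the example pose still matches a saved template after the 30-degree rotation.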

AnthroTronix developed NuGlove with SBIR and STTR funding from the Office of Naval Research, the Army Research Office, and DARPA. It evolved from a previous product, AcceleGlove™, which AnthroTronix developed with funding from the Department of Defense, the Department of Education, and the National Institutes of Health.


Mounted Force Controller

The Mounted Force Controller was developed to address the need for dismounted infantry to control and interact with items such as unmanned systems and computer software while maintaining their weapon at the ready. It is designed to replace the front grip of an M4, so it integrates easily with that weapon.

It consists of:

- 3-degree-of-freedom force-sensing grip

- 2-axis thumb joystick

- Recessed finger buttons

- Selector dial at base

- Isometric design: senses input forces without moving